Digital Credentials for Agentic Systems

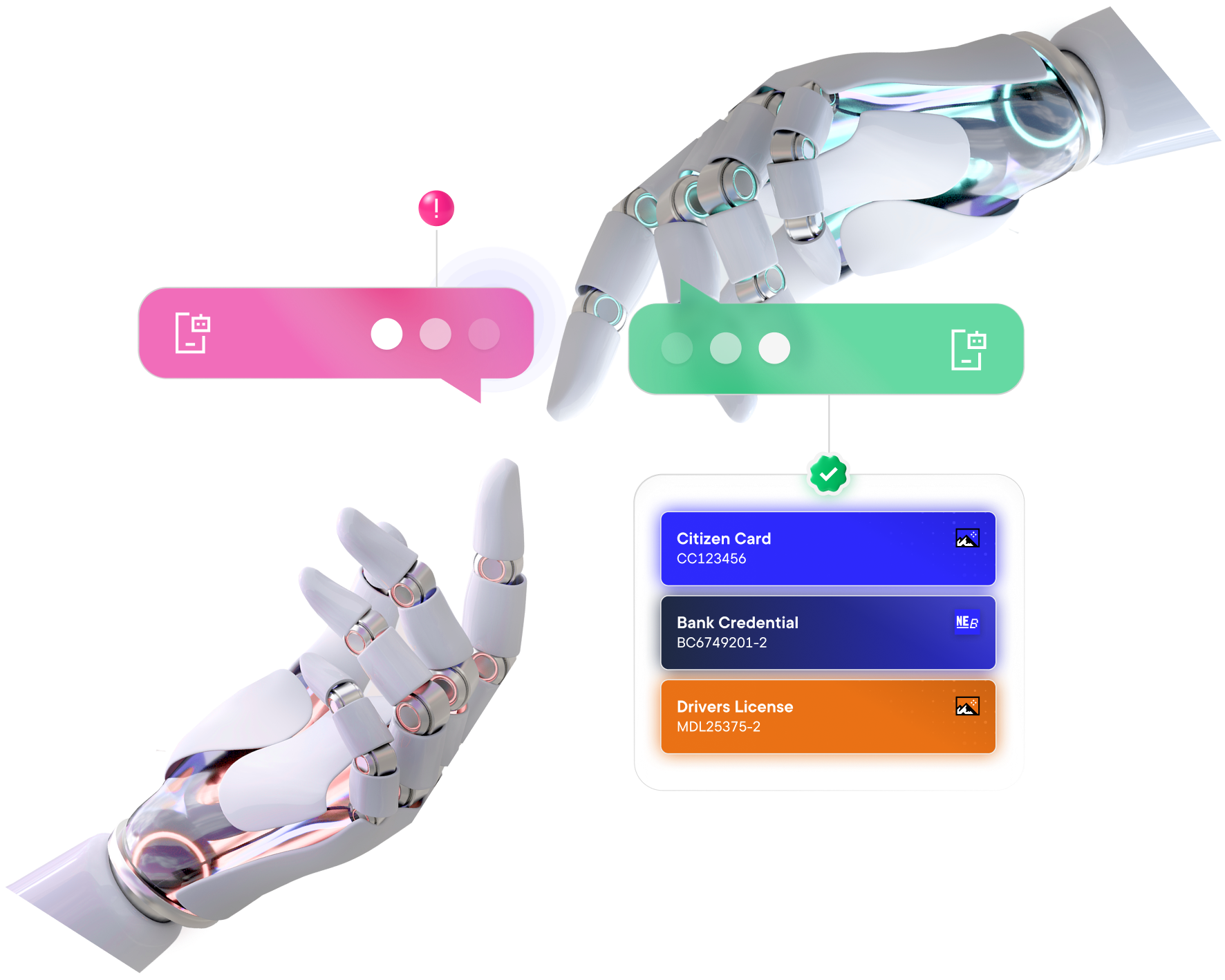

When software can act, trust has to scale

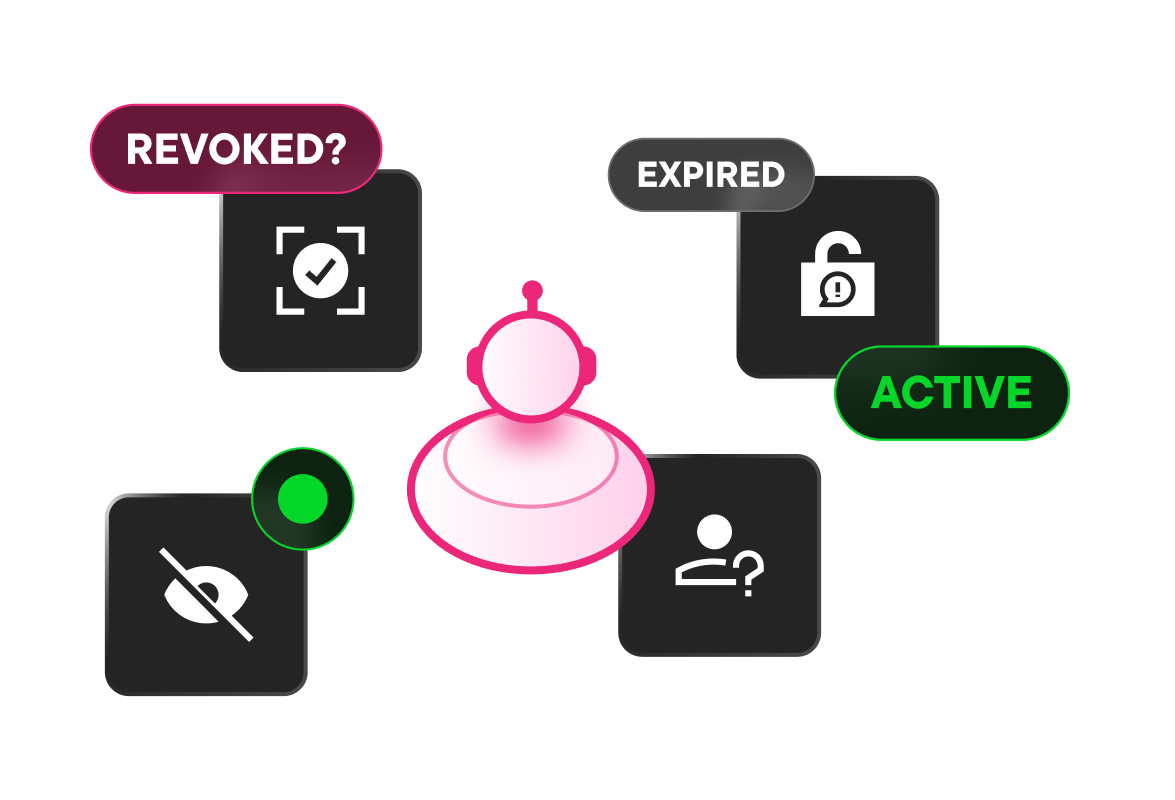

Agentic systems are moving from advising humans to acting on their behalf. MATTR Labs is exploring what this shift means for trust, authorisation, and accountability.

MATTR Labs has released a series of articles, and hosted a webinar at the conclusion of the series, examining what changes when software is authorised to act and what that means for real-world systems.